How to Build Agentic Workflows: The 3-Layer Architecture

This framework is based on the teachings of Nick Saraev, who has been building and refining agentic AI systems for years.

If you let AI handle everything end-to-end, errors compound. At 90% accuracy per step, a 5-step task succeeds only about 59% of the time (0.9^5 ≈ 0.59). That is not production-ready. It is barely better than a coin flip.
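The arithmetic behind that figure can be checked in a few lines. This is a minimal sketch that assumes every step succeeds or fails independently of the others, which is a simplification; the function name `chain_success` is made up for illustration.

```python
def chain_success(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_accuracy ** steps


# Show how quickly reliability decays as the chain gets longer.
for steps in (1, 3, 5, 10):
    print(steps, round(chain_success(0.9, steps), 3))
```

Under the same assumption, ten steps at 90% each would succeed only about a third of the time.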

The solution: separate concerns. Let AI make decisions while deterministic code handles execution.

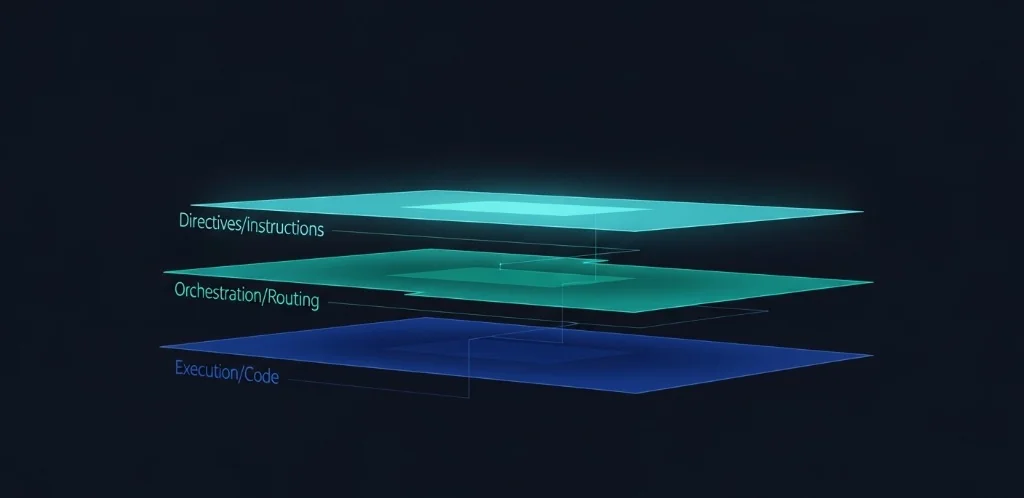

The 3-Layer Architecture

Every agentic workflow has three components:

- Layer 1: Directives - SOPs written in markdown defining what to do

- Layer 2: Orchestration - AI handles intelligent routing and decision-making

- Layer 3: Execution - Python scripts handle deterministic tasks

The AI sits between human intent (directives) and deterministic execution (scripts). It reads instructions, makes decisions, calls tools, handles errors, and improves the system over time.

Layer 1: Directives

Directives are instruction sets for the AI. Store them in a directives/ folder as markdown files.

Each directive needs:

- Clear goals and expected outputs

- Required inputs

- List of available tools

- Edge cases and exceptions

- Descriptive file naming
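A checklist like this can be enforced mechanically before a directive is used. The following is a minimal sketch, assuming each directive is organized under level-2 markdown headings named Goal, Inputs, Tools, and Edge Cases. That heading convention and the function name `missing_sections` are assumptions for illustration, so adjust them to your own files.

```python
import re

# Hypothetical convention: each required item is a level-2 markdown heading.
REQUIRED_SECTIONS = ("Goal", "Inputs", "Tools", "Edge Cases")


def missing_sections(directive_text: str) -> list[str]:
    """Return the required section names that have no '## <name>' heading."""
    found = {
        match.group(1).strip()
        for match in re.finditer(r"^##\s+(.+?)\s*$", directive_text, flags=re.MULTILINE)
    }
    return [name for name in REQUIRED_SECTIONS if name not in found]
```

An empty result means the directive has every expected section; anything else is a list of what still needs to be written.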

Write directives like you would brief a mid-level employee. Plain language. No code. Focus on the "what" and "why," not the "how."

Example directive structure:

## Goal

Scrape competitor pricing and update our comparison sheet.

## Inputs

- List of competitor URLs (from user)

- Target Google Sheet ID

## Tools

- execution/scrape_pricing.py

- execution/update_sheet.py

## Edge Cases

- If site blocks scraping, use cached data from last run

- If price format differs, normalize to USD

Layer 2: Orchestration

The orchestration layer is the AI itself. Its job is intelligent routing.

The AI should:

- Read the relevant directive for the task

- Identify required inputs

- Call execution scripts in the correct order

- Handle errors and retry when appropriate

- Update directives with new learnings

The AI does not execute tasks directly. It does not write web scrapers on the fly. It does not call APIs manually. It reads the directive and calls the appropriate execution script.

This constraint is the key insight. By limiting AI to routing decisions, you contain its probabilistic nature. The execution remains deterministic.
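One way to enforce that constraint in code is to give the AI a single entry point that can only run scripts already present in the execution folder. This is a rough sketch, not a prescribed implementation: the function name `run_tool` and the 300-second timeout are assumptions chosen for illustration.

```python
import subprocess
import sys
from pathlib import Path


def run_tool(execution_dir: Path, script_name: str, args: list[str]) -> subprocess.CompletedProcess:
    """Run one script from execution_dir; refuse anything not already in that folder."""
    allowed = {path.name for path in execution_dir.glob("*.py")}
    if script_name not in allowed:
        raise ValueError(f"unknown tool: {script_name}")
    return subprocess.run(
        [sys.executable, str(execution_dir / script_name), *args],
        capture_output=True,  # keep stdout/stderr so the orchestrator can inspect them
        text=True,
        timeout=300,  # arbitrary ceiling so a hung script cannot stall the run
    )
```

The orchestrator then decides which name and arguments to pass, and reads `returncode`, `stdout`, and `stderr` to decide what to do next.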

Layer 3: Execution

Execution scripts live in an execution/ folder. Each script handles one specific task.

Characteristics of good execution scripts:

- Single responsibility - one script, one job

- Clear inputs and outputs

- Structured error handling

- Environment variables for credentials

- Testable in isolation

Scripts handle: API calls, data processing, file operations, database interactions, external service integrations.

The goal is reliability. A script should work the same way every time given the same inputs. If something varies, that variation gets pushed up to the orchestration layer.
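As an illustration of those characteristics, here is a sketch of what a small execution script might look like. Everything in it is hypothetical (the `--price` flag, the `parse_price` helper, the JSON output shape); it only shows the pattern of one job, explicit inputs, structured errors, and a meaningful exit code.

```python
import argparse
import json
import sys


def parse_price(raw: str) -> float:
    """Convert a string such as '$1,299.00' to a float."""
    cleaned = raw.strip().replace("$", "").replace(",", "")
    return float(cleaned)


def main(argv: list[str]) -> int:
    parser = argparse.ArgumentParser(description="Normalize one price string.")
    parser.add_argument("--price", required=True, help="raw price text, e.g. '$1,299.00'")
    args = parser.parse_args(argv)

    try:
        value = parse_price(args.price)
    except ValueError:
        # Structured error on stderr plus a non-zero exit code for the orchestrator.
        error = {"ok": False, "error": f"cannot parse price: {args.price!r}"}
        print(json.dumps(error), file=sys.stderr)
        return 1

    print(json.dumps({"ok": True, "price": value}))
    return 0


# In a real script, finish with the usual entry point:
#     if __name__ == "__main__":
#         sys.exit(main(sys.argv[1:]))
```

Because `main` takes its arguments as a list and returns an exit code, the script can be tested in isolation without spawning a process.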

Setting Up Your Workspace

Minimum folder structure:

project/

├── directives/ # Markdown SOPs

├── execution/ # Python scripts

├── .tmp/ # Intermediate files (gitignored)

├── .env # API keys and credentials

└── agents.md # System context for AI

The agents.md file (or claude.md for Claude Code) contains instructions the AI reads at the start of every session. It explains the 3-layer architecture and operating principles.

Key principle: Local files are only for processing. Deliverables live in cloud services where users access them. Everything in .tmp/ is regenerated as needed.
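The folder layout described in this section can be created with a short script. This is a sketch under stated assumptions: the function name `scaffold` is made up, and adding `.env` to `.gitignore` alongside `.tmp/` is an added precaution to keep credentials out of version control rather than something the layout itself specifies.

```python
from pathlib import Path


def scaffold(root: Path) -> None:
    """Create the minimum workspace layout; safe to run more than once."""
    for folder in ("directives", "execution", ".tmp"):
        (root / folder).mkdir(parents=True, exist_ok=True)
    for filename in (".env", "agents.md"):
        (root / filename).touch(exist_ok=True)

    # Keep intermediate files (and, as an assumption, credentials) out of git.
    gitignore = root / ".gitignore"
    entries = gitignore.read_text().splitlines() if gitignore.exists() else []
    for entry in (".tmp/", ".env"):
        if entry not in entries:
            entries.append(entry)
    gitignore.write_text("\n".join(entries) + "\n")
```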

The Self-Annealing Loop

Errors make the system stronger when handled correctly.

When something breaks:

- Read the error message and stack trace

- Fix the execution script

- Test the fix

- Update the directive with what you learned

After these steps, the system is more robust than it was before the failure.

For example, if you hit an API rate limit: investigate the API, find a batch endpoint if one exists, rewrite the script to use it, test the change, and update the directive to note the limit.

Directives are living documents. They improve with every failure. Over time, your directives accumulate the edge cases and solutions that make workflows reliable.
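The "update the directive" step can itself be a small deterministic tool. The sketch below appends a dated note to a directive file. It assumes a hypothetical "## Learnings" section kept at the end of the file, and the function name `record_learning` is also made up for illustration.

```python
from datetime import date
from pathlib import Path

LEARNINGS_HEADING = "## Learnings"  # hypothetical section name, assumed to be last in the file


def record_learning(directive_path: Path, note: str) -> None:
    """Append a dated bullet to the directive, creating the section if needed."""
    text = directive_path.read_text()
    if LEARNINGS_HEADING not in text:
        text = text.rstrip("\n") + f"\n\n{LEARNINGS_HEADING}\n"
    text = text.rstrip("\n") + f"\n\n- {date.today().isoformat()}: {note}\n"
    directive_path.write_text(text)
```

Appending rather than rewriting keeps the original instructions intact while the list of known edge cases grows.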

Operating Principles

Check for tools first. Before writing a new script, check execution/ for existing tools. Only create new scripts when nothing exists.

Let AI handle judgment. Let code handle execution. AI is good at context, routing, and decisions. Code is good at consistent, repeatable actions. Respect the boundary.

Update directives as you learn. API constraints, better approaches, common errors, timing expectations - all belong in directives. But do not overwrite directives without reason. They are the instruction set.

Deliverables go to the cloud. Users access outputs in Google Sheets, Slides, or other cloud services. Local files are processing artifacts.

Example Workflow

Task: Generate a personalized outreach email for a prospect.

Directive: directives/generate_outreach.md defines the goal, required prospect data, writing guidelines, and output format.

Orchestration: AI reads the directive, calls execution/research_prospect.py to gather company data, then calls execution/generate_email.py with the research as input.

Execution: research_prospect.py hits LinkedIn and company website APIs. generate_email.py uses a template with the research data to produce the final email.

The AI made two decisions: which scripts to call and in what order. The scripts did the deterministic work. Errors in either script are isolated and fixable without affecting the other.
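To make the template step concrete, here is a sketch of how a script like generate_email.py could fill a fixed template from research data. The template wording, the field names (`first_name`, `company`, `recent_event`), and the function signature are all invented for illustration; the article does not specify them.

```python
from string import Template

# Hypothetical template; the field names are illustrative, not from the article.
EMAIL_TEMPLATE = Template(
    "Hi $first_name,\n\n"
    "I saw that $company recently $recent_event. $pitch\n\n"
    "Best,\n$sender"
)


def generate_email(research: dict[str, str], sender: str, pitch: str) -> str:
    """Fill the template; raises KeyError if the research is missing a field."""
    return EMAIL_TEMPLATE.substitute(research, sender=sender, pitch=pitch)
```

Given the same research data, the output is always the same, and a missing field fails loudly instead of producing a half-filled email.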

Why This Works

The 3-layer architecture addresses the fundamental tension in agentic AI: you want autonomous execution, but LLMs are not reliable enough to carry out long multi-step tasks end-to-end on their own.

By containing AI to the orchestration layer, you get:

- Deterministic execution that works the same every time

- Isolated failures that do not cascade

- Testable components you can trust

- A system that improves with every error

The agentic AI future is not about giving AI more autonomy. It is about giving AI the right autonomy: decisions yes, execution no.

Build your workflows on this foundation and they will scale.
